Apple to roll out child safety feature that scans messages for nudity to UK iPhones

- by theguardian

- 22 Apr 2022

- in technology

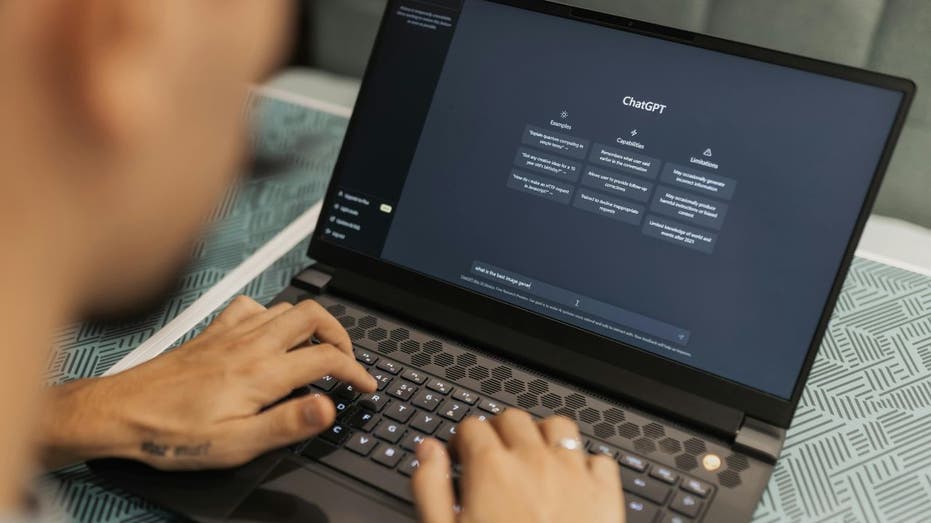

A safety feature that uses AI technology to scan messages sent to and from children will soon hit British iPhones, Apple has announced.

Apple has also dropped several controversial options from the update before release. In its initial announcement of its plans, the company suggested that parents would be automatically alerted if young children, under 13, sent or received such images; in the final release, those alerts are nowhere to be found.

The company is also introducing a set of features intended to intervene when content related to child exploitation is searched for in Spotlight, Siri or Safari.

As originally announced in summer 2021, the communication safety in Messages and the search warnings were part of a trio of features intended to arrive that autumn alongside iOS 15. The third of those features, which would scan photos before they were uploaded to iCloud and report any that matched known child sexual exploitation imagery, proved extremely contentious, and Apple delayed the launch of all three while it negotiated with privacy and child safety groups.
